Features

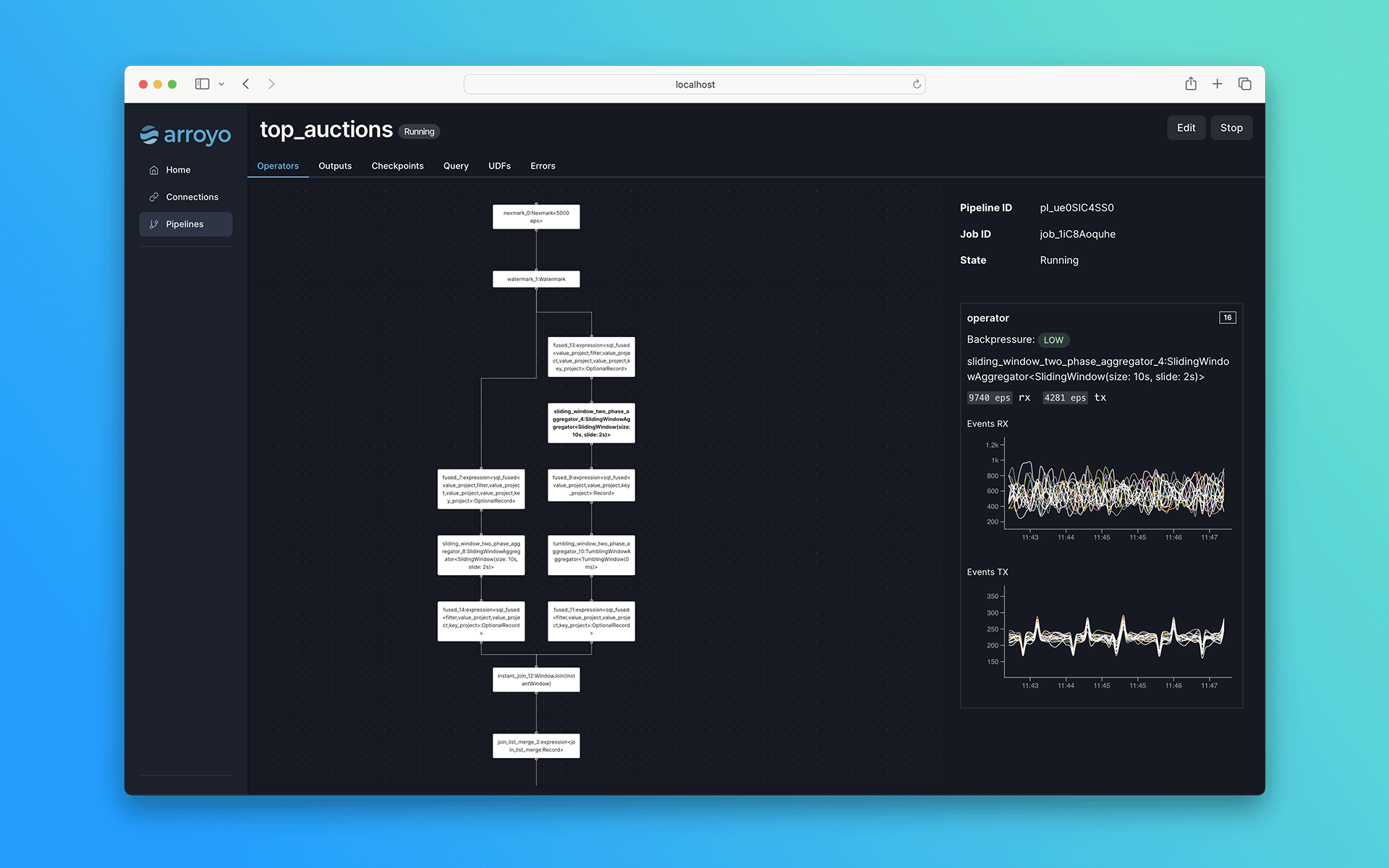

- Pipelines defined in SQL, with support for complex analytical queries

- Scales up to millions of events per second

- Stateful operations like windows and joins

- State checkpointing for fault-tolerance and pipeline recovery

- Event time processing with watermark support
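To make the window and watermark features above more concrete, here is a minimal Python sketch of counting events in event-time tumbling windows. A watermark trails the largest event time seen by a fixed delay, and a window's result is emitted once the watermark passes the window's end. This is a conceptual illustration only and is not Arroyo's implementation or API, since Arroyo pipelines are written in SQL. The window size, the allowed delay, and the function name `tumbling_counts` are all hypothetical.

```python
from collections import defaultdict
from typing import Iterable, Iterator

WINDOW_SIZE = 10  # hypothetical: 10-second tumbling windows
MAX_DELAY = 5     # hypothetical: tolerate events up to 5 s out of order


def tumbling_counts(events: Iterable[tuple[int, str]]) -> Iterator[tuple[int, int]]:
    """Yield (window_start, count) once the watermark passes the window end."""
    open_windows: dict[int, int] = defaultdict(int)
    watermark = float("-inf")
    for event_time, _payload in events:
        window_start = event_time - event_time % WINDOW_SIZE
        if window_start + WINDOW_SIZE <= watermark:
            continue  # late event: its window is already closed, so drop it
        open_windows[window_start] += 1
        # The watermark asserts that no event older than this is still expected
        watermark = max(watermark, event_time - MAX_DELAY)
        # Emit (and forget) every window whose end the watermark has passed
        for start in sorted(open_windows):
            if start + WINDOW_SIZE <= watermark:
                yield start, open_windows.pop(start)
    # End of input: flush whatever windows remain open
    for start in sorted(open_windows):
        yield start, open_windows[start]


events = [(1, "a"), (4, "b"), (12, "c"), (8, "d"), (17, "e"), (3, "f"), (25, "g")]
print(list(tumbling_counts(events)))  # [(0, 3), (10, 2), (20, 1)]
```

In this run the out-of-order event at time 8 is still counted, because the watermark has not yet passed the end of window `[0, 10)`, while the event at time 3 arrives after that window was emitted and is dropped. This sketch simply discards late data; a production engine may handle it differently.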

Use cases

Some example use cases include:

- Detecting fraud and security incidents

- Real-time product and business analytics

- Real-time ingestion into your data warehouse or data lake

- Real-time ML feature generation

Why Arroyo

There are already a number of streaming engines, including Apache Flink, Spark Streaming, and Kafka Streams. Why create a new one?

- Serverless operations: Arroyo pipelines are designed to run in modern cloud environments, supporting seamless scaling, recovery, and rescheduling

- High performance SQL: SQL is a first-class concern, with consistently excellent performance

- Designed for everyone: Arroyo cleanly separates the pipeline APIs from its internal implementation. You don’t need to be a streaming expert to build real-time data pipelines.

Getting Started

Arroyo ships as a single binary, which can be easily installed locally or run in a container.

In production

Arroyo supports several deployment targets for production use, including native support for Kubernetes. See the deployment docs for more information.

License

Arroyo is fully open-source under the Apache 2.0 license.

Support

Commercial support

Commercial support is offered by Arroyo Systems, the creators of Arroyo. Reach out to [email protected] to get in touch.

Community support

Community support is offered via the Arroyo Discord, where the Arroyo development team and community actively help users get started and solve their problems with Arroyo.

Telemetry

By default, Arroyo collects limited, anonymous usage data to help us understand how the system is being used and to prioritize future development. You can opt out of telemetry by setting `DISABLE_TELEMETRY=true` when running Arroyo services.
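If you start Arroyo services from a script, one way to opt out is to pass the variable in the child process's environment. The Python sketch below assumes you already have a command that launches an Arroyo service. The command itself is not specified here, so the `cmd` argument and the helper names `env_without_telemetry` and `run_without_telemetry` are hypothetical.

```python
import os
import subprocess
from typing import Mapping, Optional


def env_without_telemetry(base: Optional[Mapping[str, str]] = None) -> dict[str, str]:
    """Return a copy of `base` (default: the current environment) with telemetry disabled."""
    env = dict(os.environ if base is None else base)
    env["DISABLE_TELEMETRY"] = "true"  # the opt-out variable described above
    return env


def run_without_telemetry(cmd: list[str]) -> subprocess.CompletedProcess:
    """Run `cmd` (hypothetical: your usual Arroyo launch command) with telemetry off."""
    return subprocess.run(cmd, env=env_without_telemetry(), check=True)
```

Copying the environment rather than modifying `os.environ` keeps the setting scoped to the launched service instead of leaking into the rest of the calling process.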